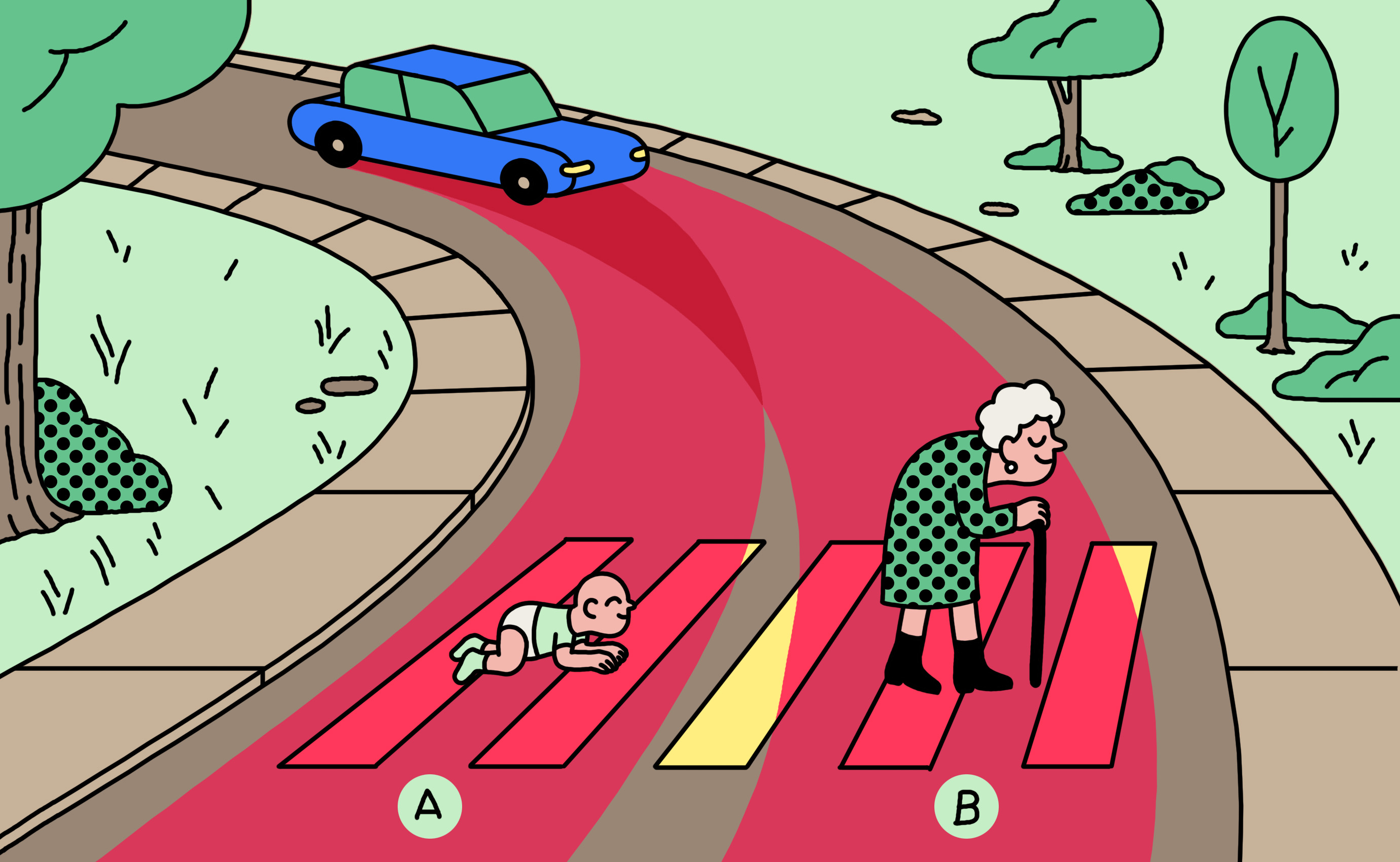

Who would sacrifice one person in order to save five? – Global differences when it comes to making moral decisions | Max Planck Institute for Human Development

Should a self-driving car kill the baby or the grandma? Depends on where you're from. | MIT Technology Review

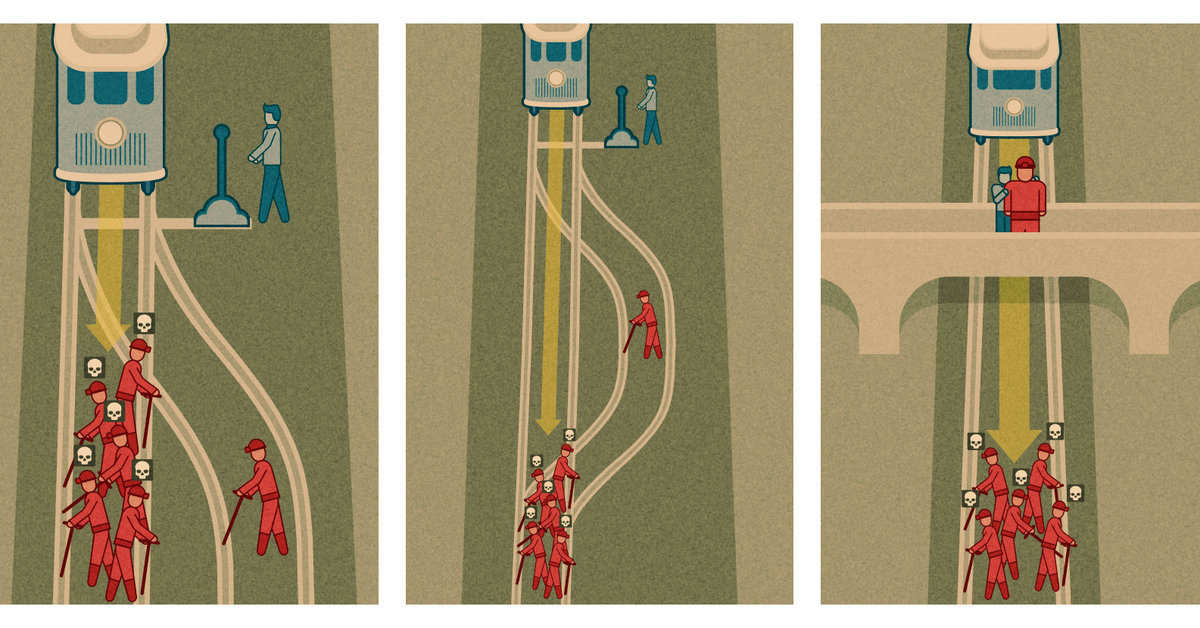

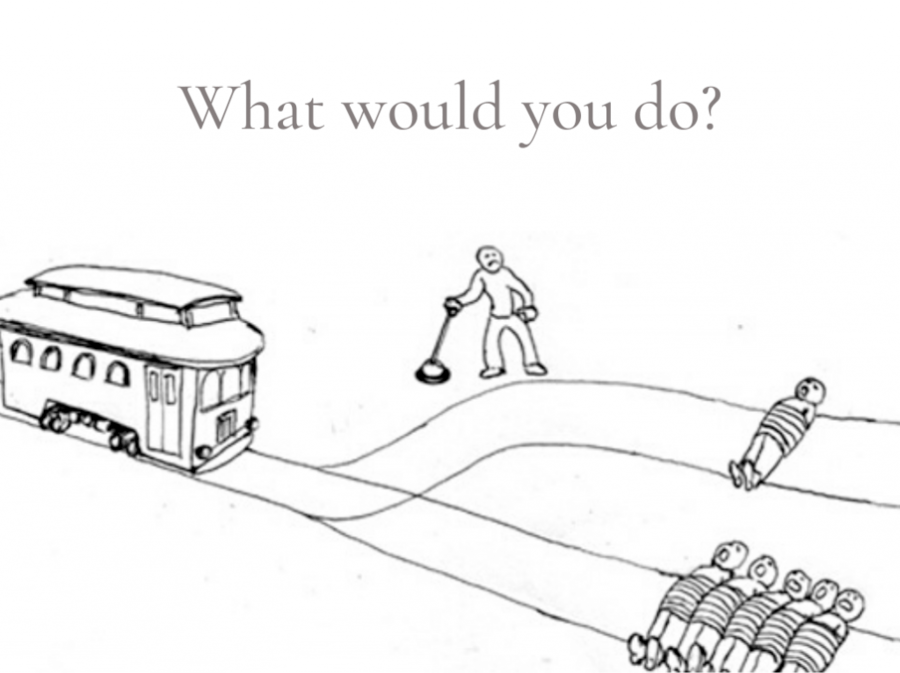

Michael Shermer on Twitter: "Trolley Problem test: subjects given chance to flip switch & divert train to side track to kill 1 worker & save 5. Only 2 of 7 did—opposite ratio

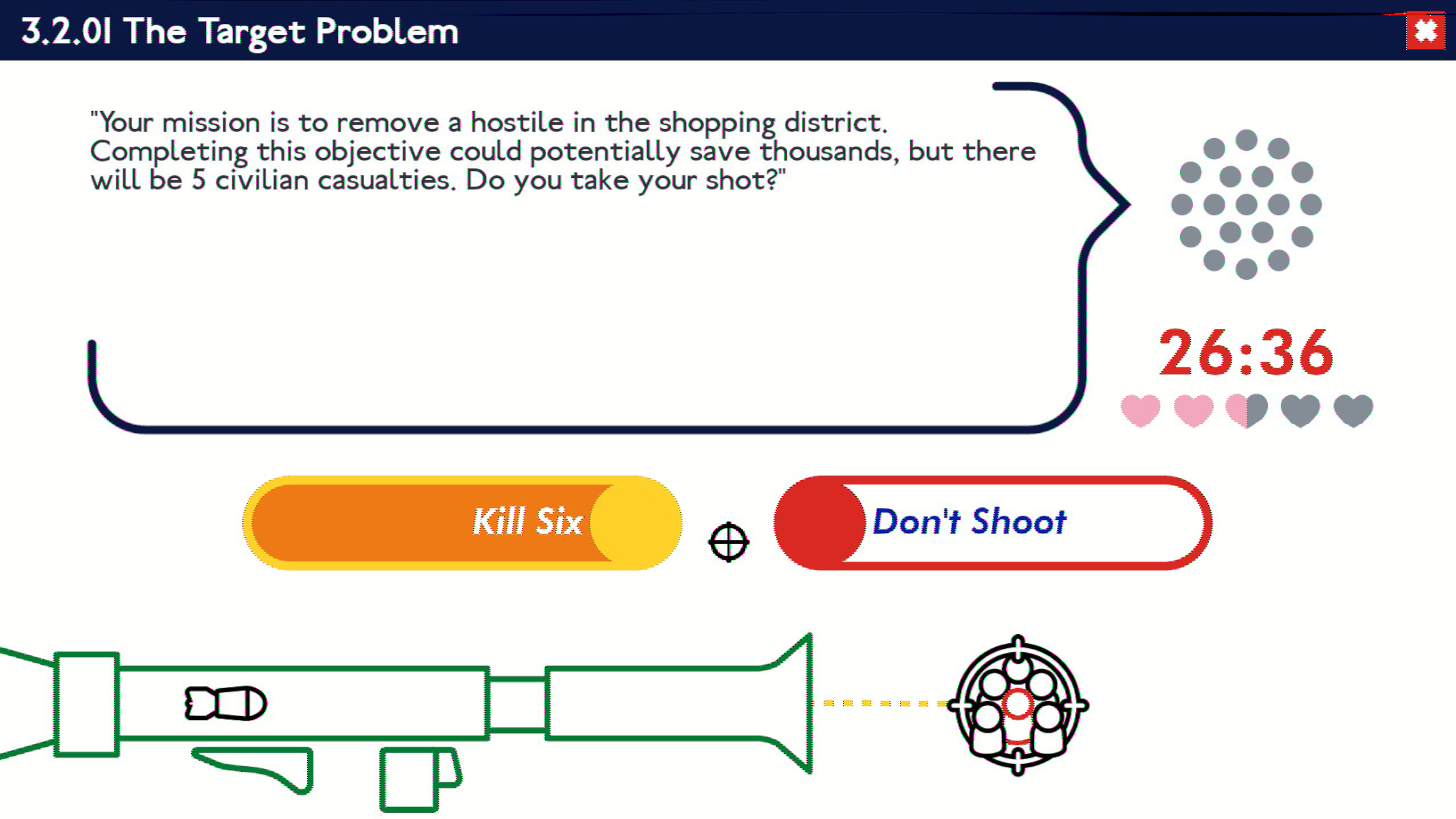

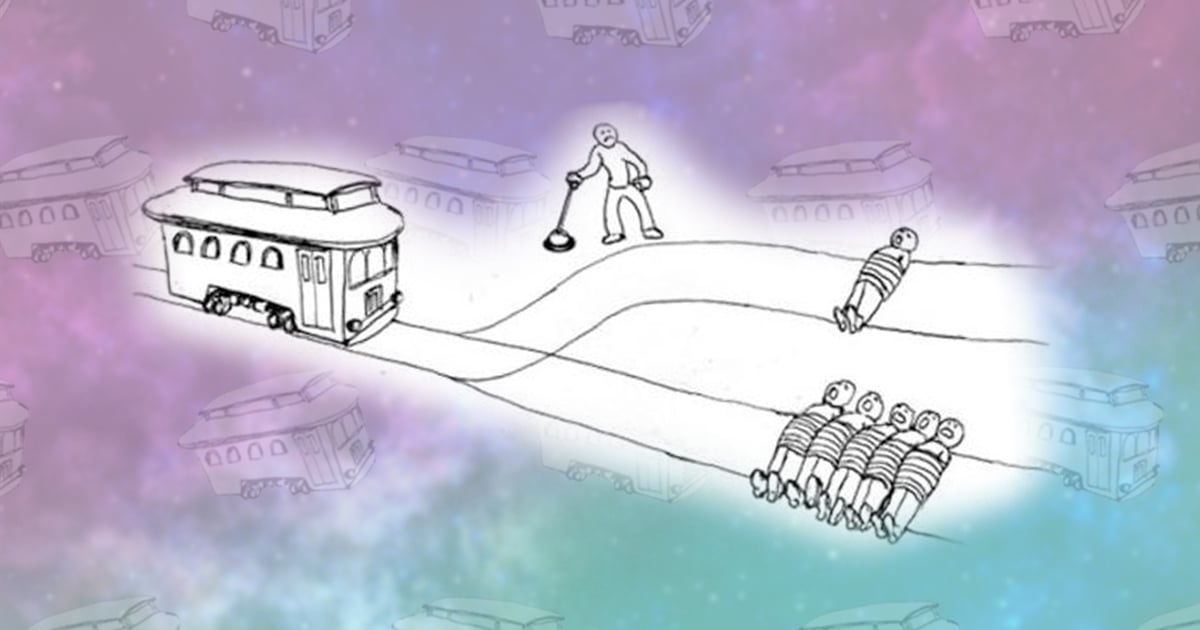
